Authors: Ze Shen Chin and Koen Holtman

This white paper describes what we think is a possible approach to classifying GPAI model providers and determining their obligations. We may expand on these ideas in the future.

Editor’s update: The European Commission published its guidelines on the scope of obligations for providers of general-purpose AI models under the AI Act on July 18th, 2025. In response, we provided an analysis of these guidelines.

The authors thank Kathrin Gardhouse and Arturs Kanepajs for helpful comments and feedback – any remaining mistakes are of course our own, and it should not be assumed that this white paper represents the opinions of the reviewers.

Summary

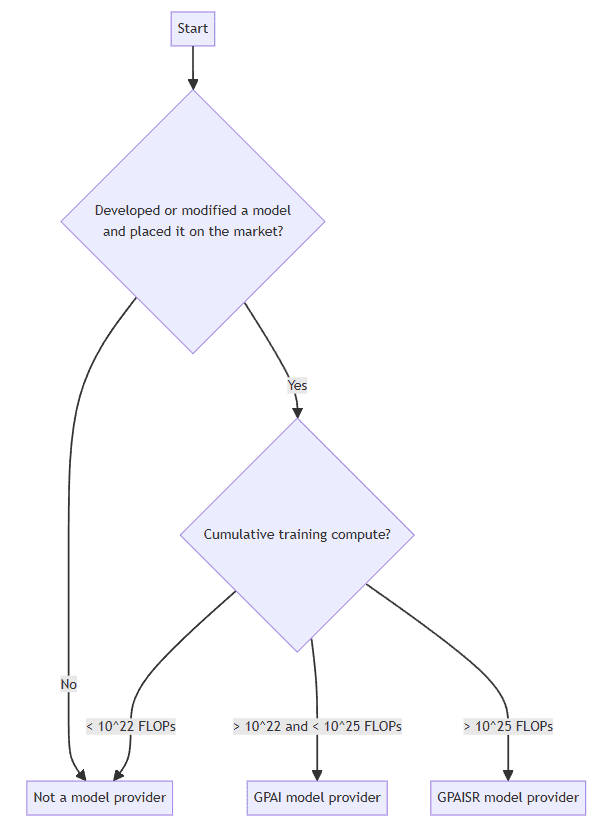

We propose a simple classification scheme that places AI models in one of three categories: not a GPAI model, GPAI model, and GPAI model with systemic risk (GPAISR). We also propose a simple definition of a provider: the entity that develops or modifies a model (or a model version) and first places it on the market is considered a provider of that model.

The flowchart below illustrates the conditions that we propose for presuming that an entity is a GPAI or GPAISR model provider.

In all cases above, as per Recital 109 of the AI Act, if a provider has only modified or fine-tuned an original model for which the obligations under the AI Act have already been fulfilled, the obligations of this provider are limited to those modifications or fine-tuning.

The table below summarizes the three types of provider designations and their obligations.

| Model type | Principle | Compute criteria for presumption | Obligations for providers |
|---|---|---|---|
| Not a GPAI model | – | Cumulative training compute < 10^22 FLOPs | None |
| GPAI model | Article 3(63) “displays significant generality and is capable of competently performing a wide range of distinct tasks” | Cumulative training compute > 10^22 FLOPs and < 10^25 FLOPs | Article 53 (excluding Annex XI Section 2) and Article 54 |
| GPAI model with systemic risk | Article 3(65) A model with risks “that is specific to the high-impact capabilities of general-purpose AI models, having a significant impact on the Union market due to their reach, or due to actual or reasonably foreseeable negative effects on public health, safety, public security, fundamental rights, or the society as a whole, that can be propagated at scale across the value chain;” | Cumulative training compute > 10^25 FLOPs (Article 51(2)) | All of the above, and Articles 52 and 55 |

The main differences between our proposal and the current draft guidelines by the AI Office are:

- We use cumulative training compute as the sole criterion to presume if a model is a general-purpose AI model, and do not consider other factors like modality

  - Where exceptions apply, the other factors like modality and task generality are to be taken into account

- We do not use the concept of a ⅓ compute threshold on model modifications as a criterion to designate an entity as a model provider

- We do not differentiate between distinct models and model versions

  - The AI Act only carries the concept of “new model” as per Recital 97, and the obligations of providers can be sufficiently clarified without introducing new concepts like distinct models and model versions.

- We regard any model modification as resulting in a new model, with exceptions.

  - These exceptions include fine-tuning within the scope of the existing documentation.

The concept of a model

AI models can be components of AI systems, but are not themselves AI systems.

Recital 97 of the AI Act states:

Although AI models are essential components of AI systems, they do not constitute AI systems on their own. AI models require the addition of further components, such as for example a user interface, to become AI systems. AI models are typically integrated into and form part of AI systems.

The Singapore Consensus on Global AI Safety Research Priorities also uses a similar definition:

AI model: A computer program, often automatically created by learning from data, designed to process inputs and generate outputs. AI models can perform tasks such as prediction, classification, decision-making, or generation, forming the engine of AI systems.

AI System: An integrated setup that combines one or more AI models with other components, such as user interfaces or content filters, to produce an application that users can interact with.

For an AI system that includes a model as a component, it is often possible to draw different boundary lines between where the model as a component ends and where the rest of the system (or the other system components) begins. This can create ambiguities, leading to differences in how different parties in a model/system value chain might interpret their obligations to each other and under the Act. To eliminate ambiguities and problems of miscommunication, we propose as a general principle that the provider of a new model should unilaterally and pre-emptively define in their documentation exactly what they consider the boundaries of their new model to be. For example, a first provider of a GPAI model may say that only the weights are their model, and that additional components needed to use the model (that is, to obtain model outputs from model inputs) should be considered system components outside of the model. A second provider might say that their model consists of both weights and several other bundled software components that, together with the weights, define the input-output behavior of the model. These other bundled components might be safety components, for example input or output filters, that mitigate certain risks.

We believe that, if a provider does not give a clear definition of their GPAI model boundary in their documentation, it is reasonable for the AI Office to check and enforce compliance on the unilateral assumption that the weights alone are the model. It would also be reasonable for downstream model users or modifiers to make the same assumption.

If a downstream modifier takes an existing upstream model with certain defined boundaries and changes the operation of components within these defined model boundaries, then the downstream modifier is presumed to have modified the upstream model, even if this modification did not touch any provided model weights.

A model modifier may conceivably change the boundaries of a model that they modify, by adding or removing components within the model boundaries. If so, this creates a new model and they should clearly document the location of the new boundaries.

Our approach above does not contradict the original definitions in Articles 3(1) and 3(63) of the Act, or the recitals.
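The boundary rules proposed in this section can be sketched in a few lines of Python. This is only an illustration of our proposal, not an established procedure: the class `ModelDocumentation`, the function `is_presumed_modification`, and the component names are hypothetical, and the weights-only default encodes the fallback assumption we suggest when no boundary is documented.

```python
from dataclasses import dataclass
from typing import Iterable, Optional

# Assumption from our proposal: with no documented boundary,
# the weights alone are treated as the model.
DEFAULT_BOUNDARY = frozenset({"weights"})


@dataclass(frozen=True)
class ModelDocumentation:
    """Hypothetical record of what a provider declares to be inside the model."""
    name: str
    declared_boundary: Optional[frozenset] = None  # None: no boundary documented

    def boundary(self) -> frozenset:
        # Fall back to the weights-only presumption when nothing is declared.
        if self.declared_boundary is None:
            return DEFAULT_BOUNDARY
        return self.declared_boundary


def is_presumed_modification(doc: ModelDocumentation,
                             changed_components: Iterable[str]) -> bool:
    """True if any changed component lies inside the documented model boundary."""
    return bool(doc.boundary() & set(changed_components))


weights_only = ModelDocumentation("model-a")
bundled = ModelDocumentation("model-b", frozenset({"weights", "output_filter"}))

# Changing an output filter modifies model-b, but not model-a.
print(is_presumed_modification(weights_only, {"output_filter"}))
print(is_presumed_modification(bundled, {"output_filter"}))
```

Under this sketch, the same downstream change is or is not a model modification depending only on the boundary the upstream provider documented, which is the point of asking providers to state that boundary explicitly.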

The concept of a provider

A provider is an entity that meets the definition in Article 3(3) of the AI Act, read together with the definitions in Articles 3(9), 3(10), and 3(11):

Article 3(3): ‘provider’ means a natural or legal person, public authority, agency or other body that develops an AI system or a general-purpose AI model or that has an AI system or a general-purpose AI model developed and places it on the market or puts the AI system into service under its own name or trademark, whether for payment or free of charge;

Article 3(9): ‘placing on the market’ means the first making available of an AI system or a general-purpose AI model on the Union market;

Article 3(10): ‘making available on the market’ means the supply of an AI system or a general-purpose AI model for distribution or use on the Union market in the course of a commercial activity, whether in return for payment or free of charge;

Article 3(11): ‘putting into service’ means the supply of an AI system for first use directly to the deployer or for own use in the Union for its intended purpose;

The above definitions are indented below for clarity:

Article 3(3):

- ‘provider’ means

  - a natural or legal person,

  - public authority,

  - agency

  - or other body

- that

  - develops an AI system or a general-purpose AI model

  - or that has an AI system or a general-purpose AI model developed

- and

  - places it on the market

  - or puts the AI system into service under its own name or trademark,

- whether for payment or free of charge;

Article 3(9):

- ‘placing on the market’ means

  - the first making available of an AI system or a general-purpose AI model on the Union market;

Article 3(10):

- ‘making available on the market’ means

  - the supply of an AI system or a general-purpose AI model for distribution or use on the Union market in the course of a commercial activity,

  - whether in return for payment or free of charge;

Article 3(11):

- ‘putting into service’ means

  - the supply of an AI system for first use directly to the deployer or for own use in the Union for its intended purpose;

Under Article 3(3), an entity must fulfil at least one of the two conditions below to be classified as a provider:

- Places it [AI system or general-purpose AI model] on the market

- Puts the AI system into service

The first criterion above is straightforward: an entity is presumed to be the provider of a general-purpose AI model if it develops or modifies the model and places it on the market, i.e. first makes it available on the Union market by supplying it for distribution or use on the Union market in the course of a commercial activity.

The term ‘first’ here is crucial. It means that if a model already exists on the Union market, an entity’s subsequent placing of the same model on the market does not qualify it as a provider, because the model is not being placed on the market for the first time. Therefore, resellers and distributors of a particular model already placed on the market cannot be considered providers of that model.

The second criterion above seems to concern only AI systems, since Article 3(11)’s definition of ‘putting into service’ specifically mentions ‘the supply of an AI system’. However, Recital 97 of the AI Act states:

When the provider of a general-purpose AI model integrates an own model into its own AI system that is made available on the market or put into service, that model should be considered to be placed on the market and, therefore, the obligations in this Regulation for models should continue to apply in addition to those for AI systems.

This implies that putting an AI system into service may also constitute placing the AI model on the market, where the AI system contains the provider’s ‘own model’. This leads to a similar conclusion as the first criterion: resellers and distributors of a particular model already placed on the market cannot be considered providers of that model, as the model is not their ‘own model’. However, if an entity makes modifications to a model developed by another entity, the modified model may now be deemed an ‘own model’, so that integrating this model into an AI system and putting the system into service would constitute placing the model on the market. We also propose that this model is deemed a ‘new model’, which will be discussed in the next section of this paper.
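The two routes to provider status discussed above can be summarized as a small predicate. This is a sketch of our reading of Article 3(3) and Recital 97, not a statement of how the AI Office applies them; the class `ModelActivity`, its field names, and the function `is_presumed_model_provider` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelActivity:
    """Hypothetical summary of what an entity did with a GPAI model."""
    developed_or_had_developed: bool
    modified_existing_model: bool
    first_made_available_on_union_market: bool
    integrated_into_own_system_on_market_or_in_service: bool


def is_presumed_model_provider(activity: ModelActivity) -> bool:
    # Under our proposal, a modified model counts as the entity's 'own model'.
    own_model = (activity.developed_or_had_developed
                 or activity.modified_existing_model)
    # Recital 97: integrating an own model into an own AI system that is made
    # available or put into service counts as placing the model on the market.
    placed_on_market = (
        activity.first_made_available_on_union_market
        or activity.integrated_into_own_system_on_market_or_in_service
    )
    return own_model and placed_on_market


# A reseller redistributes an unmodified model that is already on the market.
reseller = ModelActivity(False, False, False, False)
# A modifier fine-tunes someone else's model and ships it inside its own system.
modifier = ModelActivity(False, True, False, True)

print(is_presumed_model_provider(reseller))
print(is_presumed_model_provider(modifier))
```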

Separate from a GPAI model provider, the AI Act also defines and discusses the role of a downstream provider in Article 3(68):

‘downstream provider’ means a provider of an AI system, including a general-purpose AI system, which integrates an AI model, regardless of whether the AI model is provided by themselves and vertically integrated or provided by another entity based on contractual relations.

Unlike for a model provider, the AI Act imposes no obligations on the downstream provider. In fact, the only mention of a downstream provider in the Articles is in the context of its right to lodge complaints against the (upstream) model provider, as per Article 89.

The concept of model modifications

We propose that any modification whatsoever to a model (i.e. its weights) leads to a new model, which may no longer be considered the same model as before.

This is consistent with Recital 97 of the AI Act:

These models [general-purpose AI models] may be further modified or fine-tuned into new models.

We propose that if any modification is made to a model, however small, the presumption should be that it is no longer exactly the same model; it is a different model that can now be placed on the market for the first time. With this logic, an entity that places this new model on the market can now be considered a provider, according to Article 3(3). However, we will also consider some exceptions to this somewhat extreme presumption.

One reason for this seemingly extreme stance is that, especially for GPAISR models, small modifications to the model can lead to a significantly different risk profile, which we believe warrants additional risk assessment and mitigations. For example, the Badllama 3 paper by Volkov 2024 (https://arxiv.org/abs/2407.01376) details a method for removing safety fine-tuning from a medium-sized frontier model (Llama 3 70B) in around half an hour of training on an A100, around 6×10¹³ FLOP, compared to the ~10²⁵ FLOP needed for training it. The fine-tuning methods in Badllama 3 do not reduce the capabilities of models. Additionally, Arditi et al. 2024 (https://arxiv.org/pdf/2406.11717) find that simple techniques such as activation addition (https://arxiv.org/pdf/2308.10248) can remove safety refusals from Llama 3 70B with a single steering vector, drastically reducing safety scores while retaining capabilities. Based on our calculations, such a steering vector can be computed with ~10¹¹ to 10¹² FLOP (20-token prompt, 2-3 operations per input token and parameter, 70 billion parameters, in total a few trillion FLOP).
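The steering-vector estimate above is a simple product of the prompt length, the operations per token and parameter, and the parameter count. The sketch below reproduces that arithmetic; the function name `steering_vector_flop` is hypothetical, and the inputs are the rough assumptions quoted above rather than measured values.

```python
def steering_vector_flop(prompt_tokens: int,
                         ops_per_token_and_param: int,
                         parameters: int) -> int:
    """Rough compute for running a prompt through the model once."""
    return prompt_tokens * ops_per_token_and_param * parameters


PARAMETERS = 70_000_000_000   # 70 billion parameters (assumed, as quoted above)
PROMPT_TOKENS = 20            # assumed prompt length

low = steering_vector_flop(PROMPT_TOKENS, 2, PARAMETERS)
high = steering_vector_flop(PROMPT_TOKENS, 3, PARAMETERS)

print(f"{low:.1e} to {high:.1e} FLOP")  # prints "2.8e+12 to 4.2e+12 FLOP"
```

With these inputs the total is a few trillion FLOP, which is many orders of magnitude below the training compute of the model being modified.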

An exception to this presumption can apply where the fine-tuning is done by the original provider who developed the model, within the scope of anticipated fine-tuning stated in the original technical documentation when the model was placed on the market. The fine-tuning must neither use types of training data or training methods beyond those in the original technical documentation, nor be expected to change the risk profile of the resulting model based on the risk assessment and mitigation performed (for GPAI models with systemic risk). For example, the technical documentation may stipulate that the model will be regularly updated with more recent data of the stated data types, e.g. news articles, or that a particular type of fine-tuning will be performed continuously as a risk mitigation measure, e.g. fine-tuning against more recent jailbreaking techniques. A model that has undergone such fine-tuning does not constitute a new model.

We argue that any modification beyond this exception (e.g. modifications not made by the original provider) should be treated as producing a new model. That being said, if a downstream modifier makes modifications that are anticipated and considered safe by the original provider, then that downstream new model provider should usually be able to validly conclude that they need to do very little to comply with their provider obligations as defined in the Act, because they can simply leverage work already done upstream. We will discuss this point further in the subsequent sections.

Model classification

There are three categories for models:

- Not a GPAI model

- GPAI model

- GPAI model with systemic risk

For providers of such models, their obligations are:

- No obligations

- Articles 53 and 54

- All of the above, and Articles 52 and 55

The criterion for presuming that a model belongs to each category is its cumulative training compute:

- < 10^22 FLOPs

- > 10^22 FLOPs and < 10^25 FLOPs

- > 10^25 FLOPs

Exceptions may apply on a case-by-case basis.

These exceptions may include several factors. One of them is modality, where it may be argued that models only capable of generating audio or video currently do not meet the AI Act’s definition of “displaying significant generality”. The other one is task generality, where if it can be demonstrated that a particular model is incapable of performing any tasks beyond a narrow domain, it would not meet the AI Act’s definition of “capable of competently performing a wide range of distinct tasks”. The AI Office can approve these exceptions.

The concept of cumulative training compute is also clearly described in Recital 111 of the AI Act:

The cumulative amount of computation used for training includes the computation used across the activities and methods that are intended to enhance the capabilities of the model prior to deployment, such as pre-training, synthetic data generation and fine-tuning.

By this logic, if a model has been developed using X FLOPs and subsequently modified using Y FLOPs, it will have a cumulative training compute of X+Y FLOPs. We propose that the determination of whether this model is presumed to be a GPAI model (or GPAISR model) should be based solely on the value of X+Y. Any subsequent modification using Z FLOPs is added in the same way, giving a cumulative training compute of X+Y+Z.
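The presumption rule can be written as a short function over the summed compute. This is a minimal sketch of our proposal: the function names are hypothetical, and the treatment of a model sitting exactly on a threshold (placed in the lower category here) is our own assumption, since the categories above are stated with strict inequalities.

```python
GPAI_THRESHOLD_FLOP = 10**22
SYSTEMIC_RISK_THRESHOLD_FLOP = 10**25


def cumulative_training_compute(*stages_flop: int) -> int:
    """Sum the compute of the original training and every later modification."""
    return sum(stages_flop)


def presumed_category(cumulative_flop: int) -> str:
    # Assumption: a value exactly equal to a threshold stays in the lower category.
    if cumulative_flop > SYSTEMIC_RISK_THRESHOLD_FLOP:
        return "GPAI model with systemic risk"
    if cumulative_flop > GPAI_THRESHOLD_FLOP:
        return "GPAI model"
    return "Not a GPAI model"


# Hypothetical example: X = 9e24 FLOPs of original training, Y = 2e24 of fine-tuning.
x, y = 9 * 10**24, 2 * 10**24
print(presumed_category(x))                                  # GPAI model
print(presumed_category(cumulative_training_compute(x, y)))  # GPAI model with systemic risk
```

In this example neither X nor Y alone exceeds 10^25 FLOPs, but the modified model is presumed to be a GPAI model with systemic risk because only the sum X+Y is considered.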

Obligations of providers

With our current proposal, it is possible that there would then be a large number of model providers, if many entities perform modifications on an existing model prior to placing it on the market.

We believe this is perfectly fine, as the providers will have obligations that are proportional to the extent of the modifications made. This logic is described in Recital 109 of the AI Act:

In the case of a modification or fine-tuning of a model, the obligations for providers of general-purpose AI models should be limited to that modification or fine-tuning, for example by complementing the already existing technical documentation with information on the modifications, including new training data sources, as a means to comply with the value chain obligations provided in this Regulation.

For example, if entity A develops and places a GPAI model G (based on the cumulative compute threshold) on the market, entity A will be designated as a GPAI model provider and will have to fulfil the relevant obligations of Articles 53 and 54. Subsequently, if entity B obtains this model and performs modifications M, and the model remains classified as a GPAI model, the obligations that entity B is subject to are limited to those related to modifications M. Entity B will not be required to fulfil obligations related to the original model G.

This logic is similar to the logic of ‘safe originator models’ and ‘safely derived models’ in the third draft of the Code of Practice for GPAI models. It states that “where it can reasonably be assumed that the safely derived model has the same systemic risk profile as, or a lower systemic risk profile than, the safe originator model”, information from systemic risk analysis of the originator model shall be accounted for, with the example given of “using the results of model evaluations conducted for the safe originator model, instead of conducting the same model evaluations again for the safely derived model”.

Therefore, if an entity makes minor modifications to a model that has already been placed on the market, its additional obligations will be very minor, as they are limited to the extent of the modifications.

To summarize, the following are four exhaustive examples of providers and their obligations:

- A provider develops a model (or has a model developed) from scratch.

  - Its obligations will be the full extent of Articles 53-54 for GPAI models or Articles 52-55 for GPAI models with systemic risk.

- A provider modifies a model that has not previously been classified as either a GPAI model or a GPAI model with systemic risk, either because it did not meet the threshold of cumulative training compute or because it was not placed on the market.

  - Its obligations will be the full extent of Articles 53-54 for GPAI models or Articles 52-55 for GPAI models with systemic risk.

- A provider modifies a model previously classified as a GPAI model or a GPAI model with systemic risk, where the entity that previously developed the model has fulfilled its relevant obligations. The modifications do not result in a cumulative training compute that presumes the model to be in a different category.

  - Its obligations will be Articles 53-54 for GPAI models or Articles 52-55 for GPAI models with systemic risk, but limited to the extent of the modifications only.

- A provider modifies a model previously classified as a GPAI model, where the entity that previously developed the model has fulfilled its relevant obligations (Articles 53-54). The modifications result in a cumulative training compute that presumes the model to be a GPAI model with systemic risk.

  - Its obligations will be Articles 53-54, limited to the extent of the modifications only, as well as the full extent of Articles 52 and 55 (as these obligations have not been previously fulfilled).

In any case, the obligations of a provider are always limited to the ‘delta’ relative to the obligations already fulfilled for the previous model on which the modifications are based, where applicable.
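The ‘delta’ logic in the four examples above can be sketched as a lookup that marks each applicable Article as either due in full or limited to the modification. The function names and the string labels are hypothetical, and the sketch simplifies the obligations to whole Articles; it is an illustration of our proposal rather than a compliance tool.

```python
GPAI_ARTICLES = ("Article 53", "Article 54")
SYSTEMIC_RISK_ARTICLES = ("Article 52", "Article 55")


def applicable_articles(category: str) -> tuple:
    """Articles that apply to a provider of a model in the given category."""
    if category == "GPAI model with systemic risk":
        return GPAI_ARTICLES + SYSTEMIC_RISK_ARTICLES
    if category == "GPAI model":
        return GPAI_ARTICLES
    return ()


def provider_obligations(new_category: str, fulfilled_upstream=()) -> dict:
    """Map each applicable Article to its scope for the (new) provider.

    fulfilled_upstream lists the Articles already fulfilled for the model
    on which the modification is based (empty when built from scratch).
    """
    return {
        article: ("limited to the modification"
                  if article in fulfilled_upstream else "full")
        for article in applicable_articles(new_category)
    }


# Fourth example: a GPAI model is modified past the systemic-risk threshold.
print(provider_obligations("GPAI model with systemic risk", GPAI_ARTICLES))
```

For the fourth example this yields Articles 53 and 54 limited to the modification, and Articles 52 and 55 in full.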

Appendix

Other relevant topics not discussed in this paper

A GPAI model provider should have in place an acceptable use policy, as per Annex XII of the AI Act. This policy may include guidance on the types of modifications to the model that are considered acceptable, or recommended actions to be taken following certain types of modification, e.g. to perform additional risk assessment or mitigation measures. When another entity takes this model and performs modifications on it, the modifications are supposed to be in line with the acceptable use policy.

Some providers of fully open-source or partially open models may refrain from drawing up an acceptable use policy, or refrain from drawing up or enforcing contractual obligations that bind downstream modifiers to this policy. Such providers may instead bundle safety documentation that contains (non-binding) guidance related to safety.

It is currently unclear how the AI Office will interpret the AI Act where an entity violates the acceptable use policy, or ignores safety documentation bundled with the model. It may be argued that where an entity makes modifications to the model beyond the scope of the acceptable use policy, their obligations will no longer be “limited to that modification or fine-tuning” as per Recital 109 of the AI Act. In this situation, there is an argument to be made that the “already existing technical documentation” is no longer valid, and that the entity will be subject to the full obligations of Articles 53-54 (for GPAI models) or Articles 52-55 (for GPAI models with systemic risk) of the AI Act.

Where an entity modifies a model within the acceptable use policy, or while respecting all safety documentation, and this nevertheless leads to a serious incident, it is somewhat unclear whether the full responsibility and/or liability lies with the entity that modified the model or with the entity that originally developed the model prior to its modification. We recommend that the AI Office issue additional guidance to clarify this.

As a general principle, creating a safe value chain requires avoiding ambiguity about which party has which safety-related responsibilities, and which party will be liable for what if things go wrong. We believe that the AI Office guidance should encourage the drawing up of licenses or other contracts across the value chain that clearly allocate responsibilities and that clearly define the presence or absence of mutual indemnification arrangements and mutual assistance arrangements. Because some GPAISR model providers are companies with a lot of market power, these companies may have a legitimate concern that a court could void some indemnification clauses in their contracts, namely clauses under which downstream users of the (modified or unmodified) model indemnify them for damages if license conditions or acceptable use policies are violated, on the grounds that such a clause is an unreasonable abuse of their market power. We would like to see the AI Office provide some guidance saying that certain types of safety-related indemnification clauses would be fine. At the same time, the Office should also clarify that, given that upstream providers have a responsibility to know and police their downstream value chain, putting indemnification clauses in place can be seen as a useful measure to keep their value chain more honest, but is not necessarily always a sufficient measure.

Relevant definitions from the EU AI Act

Article 3(1): ‘AI system’ means a machine-based system that is designed to operate with varying levels of autonomy and that may exhibit adaptiveness after deployment, and that, for explicit or implicit objectives, infers, from the input it receives, how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments;

Article 3(3): ‘provider’ means a natural or legal person, public authority, agency or other body that develops an AI system or a general-purpose AI model or that has an AI system or a general-purpose AI model developed and places it on the market or puts the AI system into service under its own name or trademark, whether for payment or free of charge;

Article 3(9): ‘placing on the market’ means the first making available of an AI system or a general-purpose AI model on the Union market;

Article 3(10): ‘making available on the market’ means the supply of an AI system or a general-purpose AI model for distribution or use on the Union market in the course of a commercial activity, whether in return for payment or free of charge;

Article 3(11): ‘putting into service’ means the supply of an AI system for first use directly to the deployer or for own use in the Union for its intended purpose;

Article 3(63): ‘general-purpose AI model’ means an AI model, including where such an AI model is trained with a large amount of data using self-supervision at scale, that displays significant generality and is capable of competently performing a wide range of distinct tasks regardless of the way the model is placed on the market and that can be integrated into a variety of downstream systems or applications, except AI models that are used for research, development or prototyping activities before they are placed on the market;

Article 3(68): ‘downstream provider’ means a provider of an AI system, including a general-purpose AI system, which integrates an AI model, regardless of whether the AI model is provided by themselves and vertically integrated or provided by another entity based on contractual relations.

Recital 97: […] AI models require the addition of further components, such as for example a user interface, to become AI systems. […] When the provider of a general-purpose AI model integrates an own model into its own AI system that is made available on the market or put into service, that model should be considered to be placed on the market […]